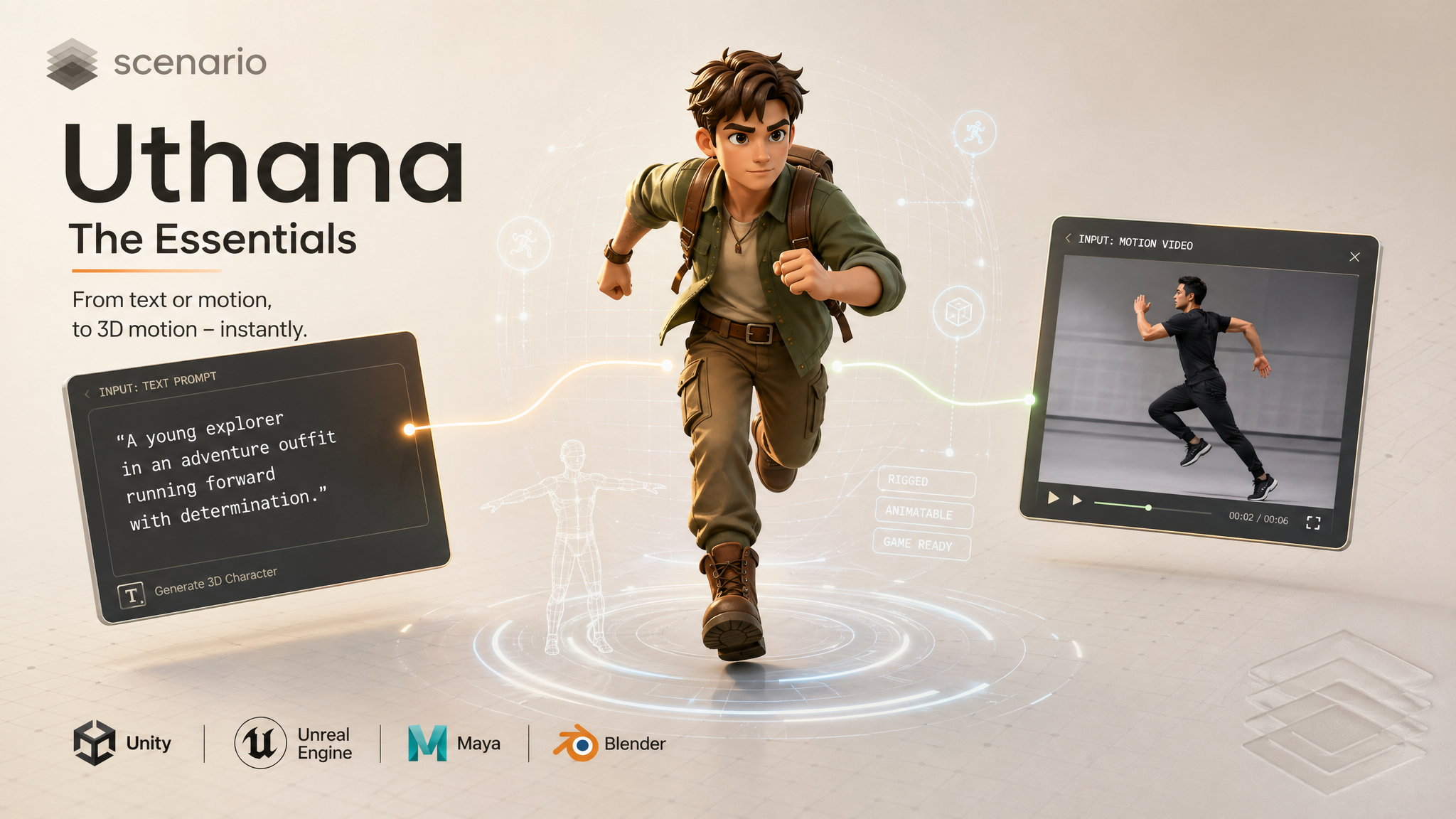

Uthana: The Essentials

Last updated: May 5, 2026

Uthana brings three linked tools to Scenario. Text-to-Motion generates 3D character animation from a text description. Video-to-Motion converts reference footage into a 3D animation file. Character Rigging takes a static humanoid mesh you already have (for example, from another 3D generator or a sculpt) and returns the same mesh with a skeleton inside it, as GLB or FBX, so you can animate or retarget it in Unity, Unreal, Maya, or Blender.

Overview

Uthana's motion models are built on foundation training from human motion capture. They produce biomechanically realistic, anatomically sound human motion. Locomotion, combat, gestures, athletics, and character performance are all well within range for Text-to-Motion and Video-to-Motion. Output is a rigged animation file you can retarget to any biped character, or apply directly to your own mesh if you upload it during generation.

Character Rigging solves a different problem: your mesh exists but has no bones yet. It does not read prompts or video. It reads geometry only, then writes a skeleton that matches a biped layout. Use it before motion when your pipeline starts from an unrigged mesh, or when a high-poly export from another tool needs a first-pass rig.

Which tool when: Use Text-to-Motion when you want to describe what a character does and have the model generate it. Use Video-to-Motion when you have footage of a real action and want to convert it to 3D animation without re-describing it in words. Use Character Rigging when you have a humanoid GLB or FBX and need a skeleton placed automatically.

Uthana Text-to-Motion

With Uthana Text-to-Motion, you describe the motion you want in plain language and the model generates a 3D animation clip from it. The prompt is everything: what the body does, how it moves, and the emotional quality of the action. Optionally, upload your own character mesh and the motion is retargeted to your skeleton automatically.

Writing a good prompt

The more specific you are about what the body actually does, the better the result. Describe actions and limb movement rather than narrative context.

A prompt like "a person walks" works, but "a tired person walks slowly, shoulders slumped, each step dragging slightly" gives the model much more to work with. Mention which limbs are involved, the pace, and the energy level of the motion. Emotional descriptors like confident, cautious, exhausted, or aggressive translate directly into how the character moves.

Avoid describing appearance or story: "a warrior prepares to fight" is vague. "A warrior plants their feet wide, raises a sword with both hands, and leans forward into a ready stance" is a motion description the model can actually use.

Settings that matter

Duration: Set this to match the action. A single gesture or pose can be 1 to 2 seconds. A walk cycle or combat sequence needs 4 to 6 seconds or more. The default is 5 seconds.

Foot IK: Turn this on when the character needs to plant their feet on the ground, as in walking, running, or landing. Leave it off for aerial or floating motions.

Steps and CFG scale: These control generation quality and prompt adherence. The defaults (50 steps, CFG 2) work well for most cases. Increase steps toward 80 to 100 for production-quality output. Keep CFG between 2 and 4 for natural-looking motion.
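
The guidance above can be captured as a small pre-flight check. This is a minimal sketch, not Scenario's or Uthana's API: the `MotionSettings` class, its field names, and `review_settings` are hypothetical names invented for illustration. Only the default values (5 seconds, 50 steps, CFG 2), the suggested CFG range of 2 to 4, and the 10 second clip limit come from this guide.

```python
from dataclasses import dataclass


@dataclass
class MotionSettings:
    """Hypothetical container for the Text-to-Motion settings described above."""
    prompt: str
    foot_ik: bool             # no default assumed; choose per motion type
    duration_s: float = 5.0   # default duration stated in this guide
    steps: int = 50           # default steps stated in this guide
    cfg_scale: float = 2.0    # default CFG stated in this guide


def review_settings(s: MotionSettings) -> list[str]:
    """Return advisory notes where settings drift from the guidance in this guide."""
    notes = []
    if s.duration_s > 10:
        notes.append("duration exceeds the 10 second clip limit")
    if not 2 <= s.cfg_scale <= 4:
        notes.append("CFG is outside the 2 to 4 range suggested for natural motion")
    if s.steps < 50:
        notes.append("steps are below the default of 50")
    return notes
```

For example, `review_settings(MotionSettings(prompt="a person walks", foot_ik=True))` returns an empty list, because every value sits at its documented default.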

Examples

Walk cycle for a game character: "A person walks forward at a relaxed pace, arms swinging naturally at their sides, head level." Enable foot IK and set the duration to 3 seconds for one full gait cycle.

Combat idle: "A fighter stands in a low defensive stance, weight shifting slightly from foot to foot, fists raised, eyes forward." Duration 4 seconds, foot IK on.

Victory celebration: "A person jumps with both arms raised above their head, lands, and pumps one fist in the air with excitement." Duration 3 seconds.

Tired character entering a room: "A person pushes open a door slowly, steps through, pauses, and leans against the wall with a long exhale, head dropping forward." Duration 6 seconds.

Uthana Video-to-Motion

With Uthana Video-to-Motion, you upload a video of a person performing a motion and the model extracts that movement as a 3D animation file. There is no prompt. The motion comes directly from what is visible in the footage. As with Text-to-Motion, you can upload your own character mesh to have the extracted motion retargeted to your skeleton.

What makes a good input video

The output quality depends entirely on the input video. A few things matter most:

Full body in frame: The whole subject, head to toe, should be visible. Cropped legs or arms mean the model cannot track those joints.

One person, clear background: Multiple people in frame confuse the tracker. A plain or contrasting background helps the model isolate the subject.

Stable camera: A static or slowly moving camera gives the cleanest tracking. Fast camera movement introduces artifacts in the output animation.

Good lighting: The subject needs to be clearly lit and distinguishable from the background. Heavy shadows or silhouettes reduce accuracy.

Trim to the motion you want: Upload only the segment you need. Idle frames before or after the action appear in the output clip.
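
Trimming can be done in any video editor. As one possible approach, the sketch below builds an ffmpeg command line for cutting a clip down to the action. It assumes ffmpeg is installed separately; ffmpeg is not part of Scenario or Uthana, and the function name and file names are hypothetical. The function only builds the argument list and does not run anything.

```python
def ffmpeg_trim_command(src: str, dst: str, start_s: float, end_s: float) -> list[str]:
    """Build an ffmpeg argument list that keeps only start_s..end_s of src."""
    if start_s < 0 or end_s <= start_s:
        raise ValueError("need 0 <= start_s < end_s")
    duration = end_s - start_s
    return [
        "ffmpeg",
        "-i", src,                  # input video
        "-ss", f"{start_s:.3f}",    # skip everything before the action
        "-t", f"{duration:.3f}",    # keep only the length of the action
        "-an",                      # drop audio (assumed unnecessary for motion extraction)
        dst,
    ]
```

To execute it, pass the list to `subprocess.run(cmd, check=True)`, for example with `cmd = ffmpeg_trim_command("jump_raw.mp4", "jump_trimmed.mp4", 2.0, 5.5)`.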

Examples

Capture a specific jump from a sports video: Trim the clip to the jump only, ensure the athlete is fully in frame, upload. The extracted animation can be retargeted to any game character.

Record a custom gesture with a phone: Stand in front of a plain wall in good light, record the gesture at normal speed, trim to the action, upload. Good for custom idles, emotes, or interactions that would be hard to describe in text.

Previs from actor reference: Film a director or actor blocking a scene, upload the footage, and use the extracted motion as a 3D previs base to evaluate timing and staging.

Uthana Character Rigging

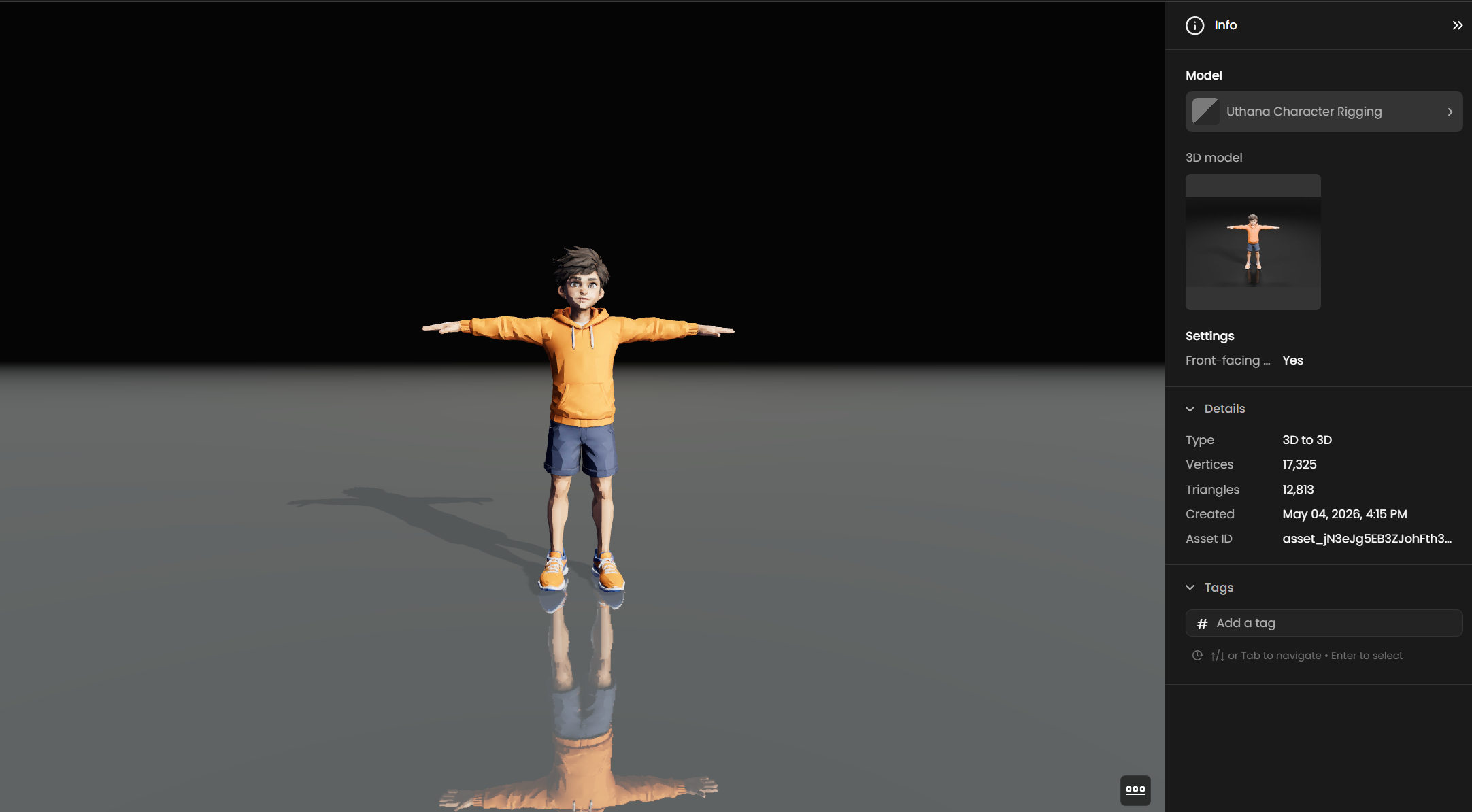

Uthana Character Rigging adds a skeleton to a biped humanoid mesh you upload. There is no prompt and no video. The model looks at your geometry and places bones so you can animate or send the file to Text-to-Motion or Video-to-Motion with the same character slot.

Important: Text-to-Motion and Video-to-Motion already auto-rig your character when you upload a mesh on those screens, so motion and rigging happen in one job. Character Rigging is the path to take when you want only the rig output first: to inspect bones, fix them in Blender, reuse the same rig across several motion tests, or keep motion and mesh prep as separate steps. Both approaches are valid on Scenario.

Hard limit in Scenario: each upload must be 30 MB or smaller. The provider rejects larger files with an explicit size error. High-resolution outputs from image-to-3D tools often exceed this until you decimate or re-export. Plan a quick size check before batching.
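
A quick size check before batching could look like the sketch below. The function name is hypothetical, and the byte value of "30 MB" is an assumption: the guide does not say whether the limit is counted in decimal or binary megabytes, so the sketch uses the stricter decimal value (30,000,000 bytes).

```python
from pathlib import Path

# Assumption: 30 MB counted as decimal megabytes, the stricter interpretation.
MAX_UPLOAD_BYTES = 30 * 1000 * 1000


def oversized_files(paths, limit_bytes=MAX_UPLOAD_BYTES):
    """Return (path, size_in_bytes) pairs for files larger than the limit."""
    too_big = []
    for p in map(Path, paths):
        size = p.stat().st_size  # file size on disk, in bytes
        if size > limit_bytes:
            too_big.append((p, size))
    return too_big
```

Running `oversized_files(Path("exports").glob("*.glb"))` on a hypothetical `exports` folder would list the meshes that need decimating or re-exporting before upload.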

What you upload

Formats: OBJ, GLB, or FBX, as accepted in the Scenario picker.

Pose: Use a T-pose or A-pose with feet on the ground so shoulders, hips, and spine read clearly to the auto-rigger.

Topology: Humanoid proportions work best. Quadrupeds, creatures with extra limbs, and props welded on as limbs are outside what this pass targets.

Controls in Scenario

Front-facing model: Leave this on when the mesh faces the camera, as in the front view of a character sheet or turntable. Turn it off if the bind pose is rotated and you want the solver to interpret that layout.

Export format: Choose GLB or FBX to match the next stop in your pipeline.

How it fits the motion tools

Rigging does not create motion by itself. It prepares the mesh so Text-to-Motion and Video-to-Motion can drive it cleanly. Typical flow: run Character Rigging on your mesh, then run a motion tool with that same character file attached so retargeting happens at generation time.

Examples

Mesh from Tripo or Hunyuan: Export a lighter copy under 30 MB, run Character Rigging, then attach the rigged asset to Text-to-Motion with a walk prompt you already trust.

Class or jam asset: Students deliver rough humanoids. Rigging first gives everyone the same skeleton layout before animation homework.

Blocked hero: Art locks a sculpt export. You rig once, validate the shoulder line in your DCC, then feed the same file into motion passes without redoing joints.

Character Retargeting

Text-to-Motion and Video-to-Motion both support uploading your own character mesh. When you provide a character file on those models, Uthana auto-rigs it there and applies the generated or extracted motion to your skeleton.

Character Rigging is the same auto-rig quality path, but exposed as its own step: mesh in, rigged mesh out, with no prompt or video. Use it when you want the skeleton first and motion later, or when you want to run the motion models with a mesh you already know is rigged. You can still upload either the raw mesh or the rigged mesh on Text-to-Motion or Video-to-Motion; the motion tools will rig on the fly if needed.

Tips for Better Results

For Text-to-Motion: describe motion, not story. Every word in the prompt should describe something the body physically does. Cut context, setting, and narrative, and focus entirely on movement.

For Text-to-Motion: use a seed when iterating on quality settings. Fix the seed so you can compare the effect of changing steps or CFG without random variation introducing noise into your comparison.
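
One way to organize such a comparison is to plan every run up front with the seed held constant. The sketch below only builds a list of settings to try; the dictionary keys and the function name are hypothetical and do not correspond to a documented API. The example values for steps and CFG follow the ranges mentioned earlier in this guide.

```python
from itertools import product


def fixed_seed_sweep(prompt, seed, steps_values=(50, 80, 100), cfg_values=(2, 3, 4)):
    """Plan one run per (steps, CFG) pair, all sharing the same prompt and seed."""
    return [
        {"prompt": prompt, "seed": seed, "steps": steps, "cfg_scale": cfg}
        for steps, cfg in product(steps_values, cfg_values)
    ]
```

Because the prompt and seed are identical in every entry, differences between the resulting clips can be attributed to steps or CFG rather than to random variation.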

For Video-to-Motion: trim before uploading. The model captures everything in the video. Any idle, setup, or unintended motion appears in the output. Trim as precisely as possible.

For both motion tools: upload your character file when you know the target. Retargeting at generation time is faster and cleaner than doing it manually after the fact. Upload the mesh you intend to use and the output arrives already fitted to your rig.

For both motion tools: start with a short test clip before committing to a long generation. Run 3 to 5 seconds first to verify the motion concept works, then re-run at the final duration you need.

Known Limitations

Human motion only. Both motion models are trained on human motion capture. Non-human creatures, quadrupeds, and highly stylized or mechanical motion are outside the intended use case and may produce poor results.

Maximum clip length is 10 seconds. Longer sequences need to be assembled from multiple clips in your DCC.
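
Planning the split ahead of time is simple arithmetic. The sketch below divides a longer sequence into equal segments that each fit within the 10 second limit. The function name is hypothetical, and equal-length segments are only one possible choice; in practice you may prefer to cut at natural breaks in the action.

```python
import math

MAX_CLIP_S = 10.0  # maximum clip length stated in this guide


def plan_clips(total_s, max_clip_s=MAX_CLIP_S):
    """Split total_s seconds into equal (start, end) segments no longer than max_clip_s."""
    if total_s <= 0:
        raise ValueError("total_s must be positive")
    count = math.ceil(total_s / max_clip_s)  # fewest clips that fit the limit
    length = total_s / count                 # equal length per clip
    return [(i * length, (i + 1) * length) for i in range(count)]
```

A 25 second sequence, for instance, is planned as three clips of roughly 8.3 seconds each, which you then generate separately and join in your DCC.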

Video-to-Motion has no quality controls. Unlike Text-to-Motion, there are no steps or guidance parameters. Output quality is determined entirely by the input video. Improving the result means improving the input.

Fine details need cleanup. Complex hair, loose clothing, and partially occluded limbs are challenging for both motion models. Expect to do some cleanup in a DCC for production assets.

Use Cases

Game development: Generate locomotion, combat, idle, and interaction animations without a mocap session. Use Text-to-Motion for common actions and Video-to-Motion to capture specific movements from reference footage.

Film and previs: Block out character performance and staging from a text description, or extract timing from on-set actor reference, before committing to keyframe animation or full mocap.

Virtual humans and avatars: Build motion libraries for social platform avatars, virtual presenters, or interactive characters quickly from prompts or reference clips.

Rapid prototyping: Validate animation concepts, camera angles, and character blocking early in production without needing an animator for every iteration.