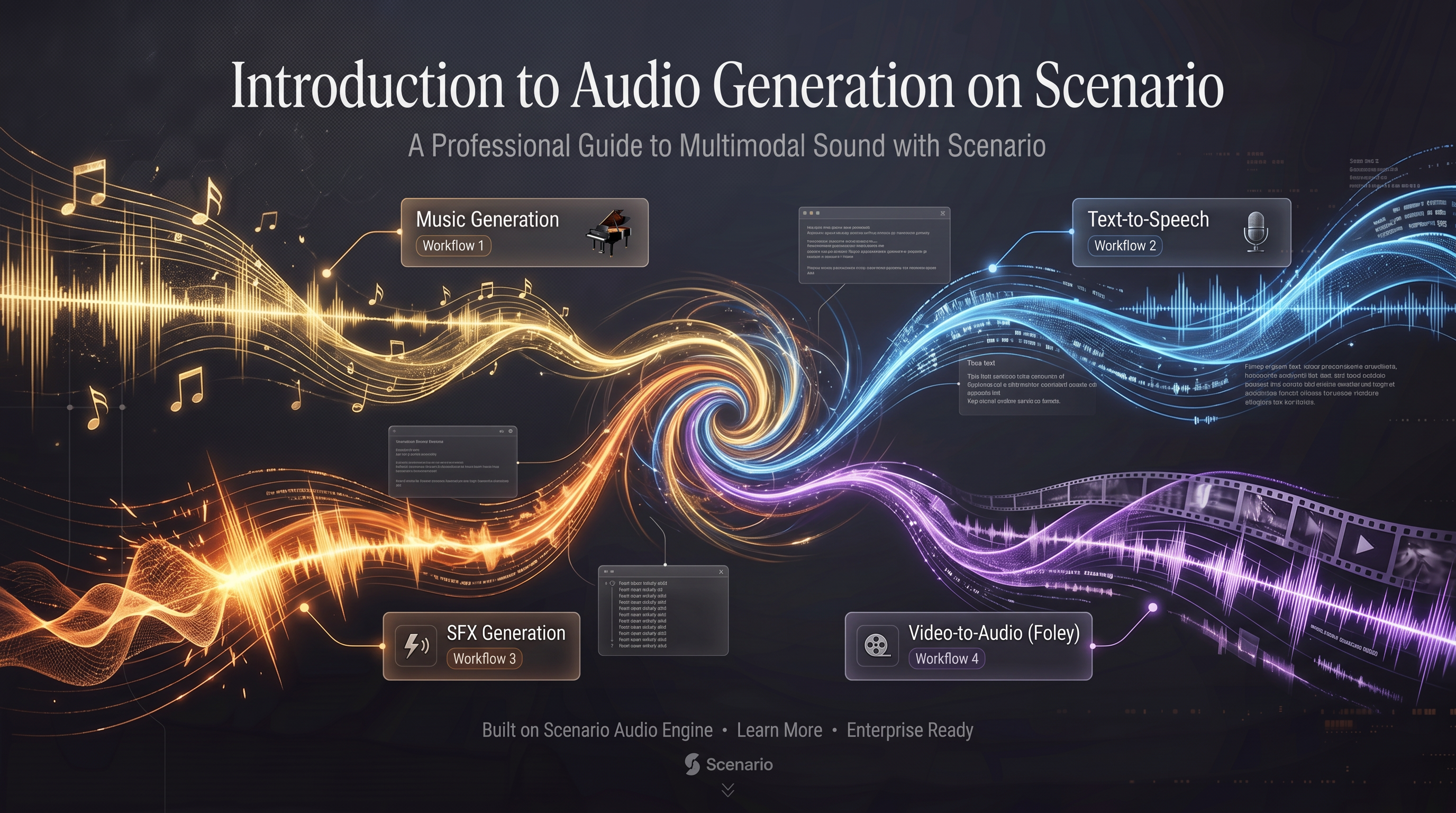

Introduction to Audio Generation on Scenario

Last updated: April 20, 2026

Scenario provides a full suite of audio generation models spanning four categories: music, text-to-speech, sound effects, and video-to-audio. This article is a quick-reference guide to help you find the right model for your use case. Each model listed below links to a dedicated article with full parameter documentation, tips, and examples.

Music Generation

Music models generate original audio tracks from text descriptions. They range from quick 30-second clips to full structured songs up to 5 minutes in length.

ModelID | Output length | Key differentiator | |

| 2:30 to 4:45 min | Full songs with vocals. Instrumental mode, auto-lyrics, and 14+ structure tags. Highest-quality output. | |

| 2:30 to 4:45 min | Full songs. Lyrics required. Same structure tag system as 2.6. Use if manual lyrics control is preferred. | |

| Up to 3 min | Section-by-section composition with per-section styles and narrative lines. Best for structured cinematic or game music. | |

| 3 sec to 3 min | Single prompt, fast output. Reliable instrumental mode. Opus 192kbps max quality. | |

| Up to ~2 min, MP3 or WAV | Full songs from text or image references. Verse/chorus structure tags. SynthID watermarked. | |

| 30 seconds, MP3 | Quick 30-second music clips. Accepts image references. SynthID watermarked. | |

| 5 to 150 seconds | Royalty-free instrumental music. Negative prompt, refinement, and creativity sliders. | |

| Up to 30 seconds | Melody-conditioned generation. Can continue or transform a reference audio clip. | |

| 30 seconds, 48kHz stereo | Legacy model. Instrumental only. Only Lyria model with negativePrompt support. |

Quick decision guide: For full songs with vocals, use MiniMax Music 2.6. For structured cinematic compositions, use ElevenLabs Music Advanced. For quick instrumental clips, use ElevenLabs Music or Lyria 3 Clip. For royalty-free background music with quality controls, use Beatoven.

Text-to-Speech

TTS models convert written text into spoken audio. They vary in language support, voice selection, emotional range, and latency.

ModelID | Voices / Languages | Key differentiator | |

| 30 voices, 24 languages | Inline audio tags for emotion control ([determination], [whispers]). Multi-speaker dialogue. Up to 5,000 characters. | |

| 17 voices, 40+ languages | Highest quality TTS. Pause syntax, interjections, 10 emotions. Broadcast-ready output. | |

| 17 voices, 40+ languages | Same as HD, optimized for speed and cost. Best for real-time and high-volume pipelines. | |

| Custom voices, 70+ languages | Most expressive ElevenLabs model. Alpha release, non-real-time. 3,000 character limit. | |

| Custom voices, 29 languages | Emotionally rich speech. Production-ready. 10,000 character limit. | |

| Custom voices, 32 languages | Low-latency ElevenLabs. Cost-effective for real-time applications. | |

| Multiple voices, multilingual | Expressive delivery with speech tags like [pause] and whisper mode. Codec and language options. | |

| Multiple voices | Previous Gemini TTS generation. Migrate to Gemini 3.1 Flash for new projects. | |

| Multiple voices | Previous Gemini TTS generation. Migrate to Gemini 3.1 Flash for new projects. | |

| Reference audio cloning | Voice cloning from a short audio reference. 3B parameter model, higher quality than 1B. | |

| Reference audio cloning | Voice cloning from a short audio reference. Faster, lighter than 3B. | |

| Reference audio cloning | High-fidelity voice cloning at 48kHz from a reference audio clip. |

Quick decision guide: For general speech with emotion control, use Gemini 3.1 Flash TTS. For broadcast-quality narration in 40+ languages, use MiniMax Speech 2.8 HD. For voice cloning from a reference clip, use Lux TTS or Tada 3B. For real-time applications, use MiniMax 2.8 Turbo or ElevenLabs Turbo 2.5.

Sound Effects

SFX models generate short audio clips from text descriptions, without requiring a video input.

ModelID | Max duration | Key differentiator | |

| 30 seconds | Precise sound effects with guidance control and loop toggle. Commercially licensed. | |

| 35 seconds | Royalty-free SFX with refinement and creativity sliders. Negative prompt support. | |

| 30 seconds | Academic model (MIT license). cfgStrength control. Pairs with MM Audio 2 for video-to-audio workflows. |

Video-to-Audio

Video-to-audio models analyze a silent video and generate synchronized audio that matches the on-screen action. They combine visual analysis with a text prompt to produce temporally aligned Foley and ambient sound.

ModelID | Max duration | Key differentiator | |

| 30 seconds | More precise temporal alignment than MM Audio. Default 8s output, up to 30s. | |

| 30 seconds | Original MM Audio model. Default 30s output. MIT license. |

Choosing the Right Model

I need to... | Start with |

Generate a full song with vocals and arrangement | MiniMax Music 2.6 |

Compose music section-by-section for a game or film | ElevenLabs Music Advanced |

Generate a quick instrumental background track | ElevenLabs Music or Beatoven Music Generation |

Generate a 30-second music clip | Google Lyria 3 Clip |

Narrate a script in multiple languages | MiniMax Speech 2.8 HD or Gemini 3.1 Flash TTS |

Clone a specific voice from a recording | Lux TTS or Tada 3B TTS |

Build a real-time voice assistant | MiniMax Speech 2.8 Turbo or ElevenLabs Turbo 2.5 |

Generate sound effects for a game | ElevenLabs Sound Effects 2 or Beatoven Sound Effect |

Add synchronized audio to a silent video | MM Audio 2 |

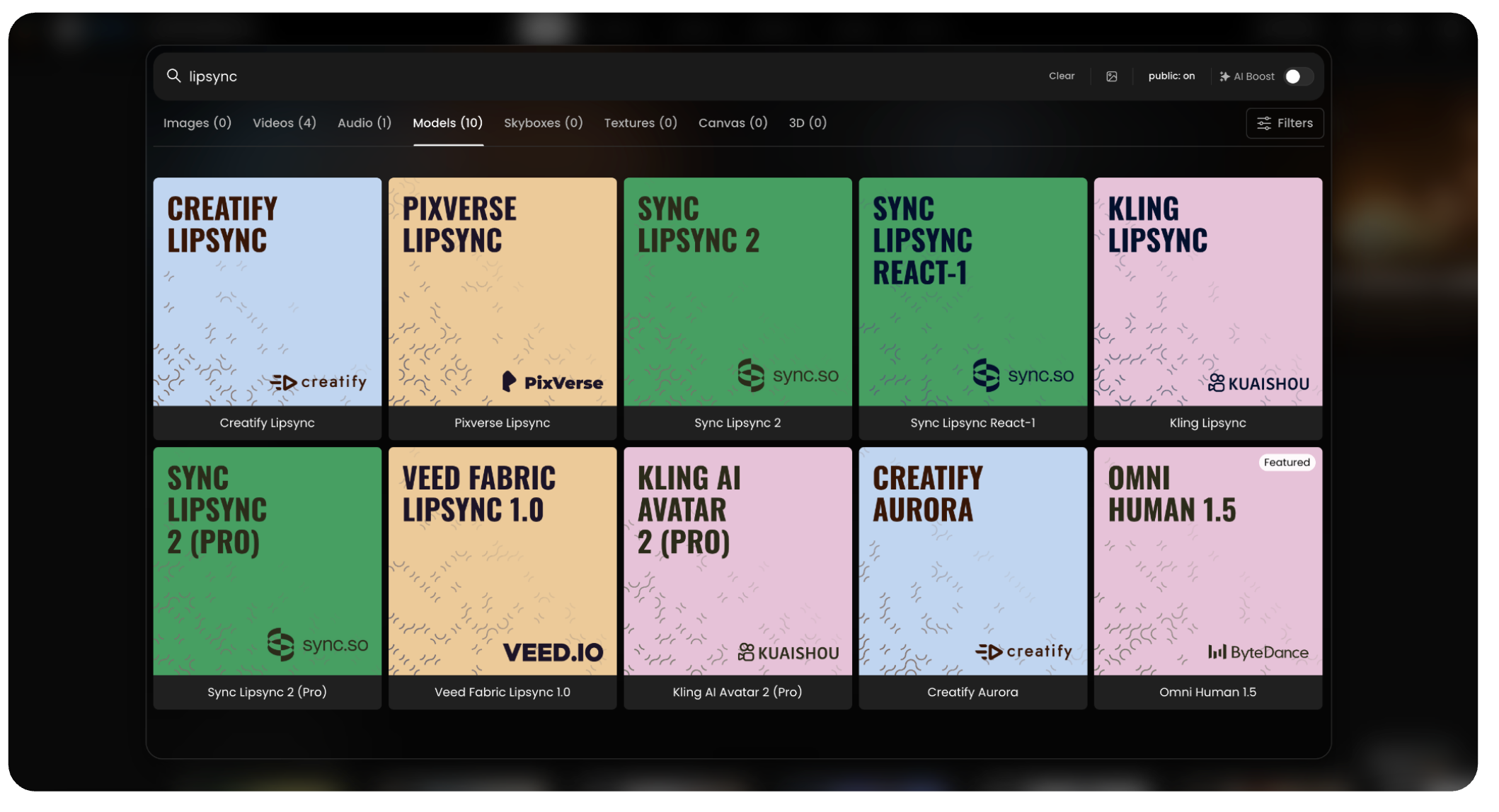

Lip Sync Animation: Bringing Characters to Life

And finally, lip sync animation, found in the “Video generation” tools. Just open the main Video generation interface, and select from the dedicated Lip Sync models to get started.

You can choose from a wide variety of specialized models, ranging from high-fidelity avatars like Omni Human 1.5 and Kling AI Avatar 2, to specialized tools for stylized motion or fast clips. Each model offers a different look, feel, or level of control to suit your specific project needs.

All lip-sync models on Scenario are covered in a dedicated section of the Knowledge Base, available here: https://help.scenario.com/en/c/lip-sync. This section includes both high-level overviews and in-depth documentation for specific models.

AI lip sync technology allows the synchronization of a character’s or person’s lip movements with an audio track or written text converted into speech. Depending on the model, you can upload an image or video together with audio, or simply provide text to generate both the voice and the synchronized lip movement.

Available Models:

Omni Human 1.5: (ByteDance) Generates digital humans from a single image and audio file, creating high-quality avatars with natural gestures.

Creatify Aurora: A specialized model by Creatify designed for creating dynamic, lifelike character animations.

Sync Lipsync React-1: Tailored for reactive video generation, syncing lip movements perfectly for reaction-style content.

Kling AI Avatar 2 (Pro): The advanced professional version of the Kling avatar model, offering higher fidelity and enhanced control.

Veed Fabric Lipsync 1.0: This model provides seamless lip synchronization integrated with Veed's video fabric technology.

Sync Lipsync 2 (Pro): A professional-grade “zero-shot” model that aligns video with new audio while preserving the original speaking style with high precision.

Pixverse Lipsync: Part of a broader video generation ecosystem, offering lip sync combined with visual effects and cinematic quality.

Creatify Lipsync: A tool for creating looped lip sync animations, with fast generation for short, lightweight videos.

Sync Lipsync 2: The standard “zero-shot” model that aligns any video with new audio, featuring multi-speaker support.

Kling Lipsync: Focused on short, fast lip sync generation from uploaded video and audio or text, with adjustable voice speed and type.

A Complete Solution for Creative Audio Workflows

No matter what you're creating, voiceovers, music, sound effects, or animated dialogue, Scenario’s audio tools are built for speed and flexibility, providing a complete solution for creative audio workflows. From expressive speech and cinematic sound to stylized performance and lip sync animation, everything works together to give you full control from the first prompt to the final frame.