GPT Image 2 - The Essentials

Last updated: June 2, 2026

Covers GPT Image 2 (model_openai-gpt-image-2)

Introduction

GPT Image 2 is OpenAI's flagship image generation and editing model, now available on Scenario. It combines state-of-the-art text-to-image generation with powerful image editing capabilities, supporting up to 10 reference images, inpainting via alpha masks, and output resolutions up to 3840x3840 pixels.

Overview

GPT Image 2 is designed for workflows that require both creative generation and precise editing. Unlike prompt-only models, it accepts reference images as direct inputs, enabling style transfer, character consistency, and targeted region editing within a single generation call.

The model uses a built-in reasoning pass before generating, which improves prompt adherence on complex scenes, multi-object compositions, and text-in-image requests. It is well suited for production use cases across games, marketing, product visualization, and concept development.

On Scenario, GPT Image 2 is available as a third-party model. All generation jobs are billed based on resolution and quality setting. No fine-tuning or LoRA training is supported for this model.

What It Does

Text to image (txt2img): Generate images from a written prompt. Supports complex scenes, multi-subject compositions, and text rendered inside the image.

Image editing with references (img2img): Provide up to 10 reference images to guide the output. The model uses these to match style, character appearance, object shape, or composition.

Inpainting with masks: Supply a reference image and an alpha-masked image to target a specific region for editing while leaving the rest of the image unchanged.

Key Features

Up to 10 reference images per generation for context-guided editing

Alpha-mask inpainting for surgical, region-specific edits

Flexible output resolution: 16px to 3840px per edge, in multiples of 16

Aspect ratios up to 3:1 (for example, 3840x1280 or 1280x3840)

Four quality presets: auto, low, medium, high

Generate up to 10 images per request with the Image Count parameter

Strong text rendering inside images, including multilingual content and infographics

Opaque or auto background modes (transparent background is not supported)

Parameters

Here is a quick rundown of what each setting does and how to get the most out of it.

Prompt

This is the only required field. Write a description of the image you want to generate or edit. The model reads the full prompt and reasons over it before generating, so longer and more detailed prompts generally produce better results. You can use up to 32,000 characters. A good structure to follow is: scene or setting first, then the main subject, then stylistic details, then any constraints or exclusions.
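The suggested prompt order can be sketched as a small helper. This is a minimal illustration, not part of Scenario's tooling: the function name `build_prompt` and its arguments are hypothetical, and the only figure taken from this guide is the 32,000 character limit.

```python
MAX_PROMPT_CHARS = 32_000  # character limit stated in this guide


def build_prompt(scene: str, subject: str, style: str = "", constraints: str = "") -> str:
    """Join prompt parts in the suggested order: scene, subject, style, constraints."""
    sentences = []
    for part in (scene, subject, style, constraints):
        text = part.strip()
        if not text:
            continue  # skip parts that were left empty
        if text[-1] not in ".!?":
            text += "."  # keep each part a separate sentence
        sentences.append(text)
    prompt = " ".join(sentences)
    if not prompt:
        raise ValueError("the prompt is required and cannot be empty")
    if len(prompt) > MAX_PROMPT_CHARS:
        raise ValueError(
            f"prompt is {len(prompt)} characters; the limit is {MAX_PROMPT_CHARS}"
        )
    return prompt


if __name__ == "__main__":
    print(build_prompt(
        scene="A sunlit forest clearing at golden hour",
        subject="A lone fox sits on a mossy rock",
        style="Photorealistic, shallow depth of field",
        constraints="No text, no people",
    ))
```

Keeping the four parts as separate arguments makes it easy to vary one of them (for example, the style) while holding the rest of the prompt fixed.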

Reference Images

You can upload up to 10 images to guide the generation. These can be a character you want to keep consistent, a visual style you want to match, an object you want to place in a new scene, or the base image you want to edit. When you use more than one reference image, mention each one by number in your prompt so the model knows how to use them. For example: "Image 1 is the product. Image 2 is the background scene. Place the product from Image 1 into the environment of Image 2."
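One way to keep the numbering in the prompt aligned with the upload order is to generate the labels from a list. The sketch below is illustrative only: `label_references` is a hypothetical helper, and it assumes that "Image 1" refers to the first uploaded reference, "Image 2" to the second, and so on.

```python
MAX_REFERENCES = 10  # reference image limit stated in this guide


def label_references(roles: list[str], instruction: str) -> str:
    """Build a prompt that names each reference image by number, in upload order."""
    if not roles:
        raise ValueError("at least one reference role is required")
    if len(roles) > MAX_REFERENCES:
        raise ValueError(f"at most {MAX_REFERENCES} reference images are supported")
    # Numbering starts at 1 to match the "Image 1, Image 2" wording used in prompts.
    labels = [
        f"Image {index} is {role.strip().rstrip('.')}."
        for index, role in enumerate(roles, start=1)
    ]
    return " ".join(labels + [instruction.strip()])


if __name__ == "__main__":
    print(label_references(
        ["the product", "the background scene"],
        "Place the product from Image 1 into the environment of Image 2.",
    ))
```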

Mask

The mask is used for inpainting, which means editing only a specific area of an image while leaving everything else untouched. It must be the same file format and exactly the same pixel dimensions as your reference image, and it must have an alpha channel. The area you paint as transparent (alpha 0) is what the model will regenerate. Everything that is opaque (alpha 255) stays as is. When exporting from Photoshop or GIMP, make sure to save with transparency preserved, otherwise the mask will not work.
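A quick pre-upload check can catch the two most common mask problems: mismatched dimensions and a missing alpha channel. The sketch below uses only the Python standard library and assumes both files are PNGs. It reads the PNG header directly, so it does not detect palette PNGs that carry transparency in a separate chunk. The function names are hypothetical.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
ALPHA_COLOR_TYPES = {4, 6}  # PNG color types: 4 = grayscale + alpha, 6 = RGBA


def read_png_header(data: bytes) -> tuple[int, int, bool]:
    """Return (width, height, has_alpha) from the IHDR chunk of a PNG file."""
    if len(data) < 26 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("not a PNG file")
    # IHDR layout: width (4 bytes), height (4 bytes), bit depth (1), color type (1), ...
    width, height, _bit_depth, color_type = struct.unpack(">IIBB", data[16:26])
    return width, height, color_type in ALPHA_COLOR_TYPES


def check_mask(reference_png: bytes, mask_png: bytes) -> list[str]:
    """Return a list of problems; an empty list means the mask passes these checks."""
    problems = []
    ref_w, ref_h, _ = read_png_header(reference_png)
    mask_w, mask_h, mask_has_alpha = read_png_header(mask_png)
    if (ref_w, ref_h) != (mask_w, mask_h):
        problems.append(
            f"mask is {mask_w}x{mask_h} but the reference is {ref_w}x{ref_h}"
        )
    if not mask_has_alpha:
        problems.append("mask has no alpha channel")
    return problems
```

To use it, read both files in binary mode, for example `check_mask(open("reference.png", "rb").read(), open("mask.png", "rb").read())`, where the file names are placeholders.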

Image Count

You can generate up to 10 images in a single request. The default is 1. Generating multiple images at once is a good way to explore different interpretations of the same prompt without running separate jobs. A count of 4 is a practical starting point when you want to compare directions.

Width and Height

Both values must be multiples of 16 and can go up to 3840px per edge. The aspect ratio cannot exceed 3:1. The easiest approach is to use one of the built-in resolution presets in the UI, which are already sized correctly. If you set a custom size, keep in mind that outputs above 2560x1440 are treated as experimental and quality may vary more than at standard sizes.
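The size rules above can be checked before submitting a job. The following is a minimal sketch with hypothetical function names. One assumption is labeled in the code: the guide does not say how "above 2560x1440" is measured, so the sketch compares total pixel count.

```python
MIN_EDGE = 16
MAX_EDGE = 3840
STEP = 16
MAX_ASPECT = 3  # longest edge may be at most 3x the shortest edge
# Assumption: "above 2560x1440" is interpreted as a total pixel count.
EXPERIMENTAL_PIXELS = 2560 * 1440


def validate_size(width: int, height: int) -> list[str]:
    """Return a list of rule violations; an empty list means the size is valid."""
    errors = []
    for name, value in (("width", width), ("height", height)):
        if not MIN_EDGE <= value <= MAX_EDGE:
            errors.append(f"{name} must be between {MIN_EDGE} and {MAX_EDGE}px")
        elif value % STEP != 0:
            errors.append(f"{name} must be a multiple of {STEP}")
    if min(width, height) > 0 and max(width, height) > MAX_ASPECT * min(width, height):
        errors.append("aspect ratio exceeds 3:1")
    return errors


def is_experimental(width: int, height: int) -> bool:
    """True when the output is larger than the 2560x1440 pixel budget."""
    return width * height > EXPERIMENTAL_PIXELS


def snap_to_step(value: int) -> int:
    """Round to the nearest multiple of 16 and clamp to the allowed edge range."""
    snapped = (value + STEP // 2) // STEP * STEP
    return max(MIN_EDGE, min(MAX_EDGE, snapped))
```

For example, `validate_size(3840, 1280)` returns an empty list because the size sits exactly on the 3:1 limit, while `validate_size(1000, 1024)` reports that the width is not a multiple of 16; `snap_to_step(1000)` returns 1008.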

Quality

There are four levels: low, medium, high, and auto. Low is fast and works well for drafts and ideation. Medium covers most production needs. High is the right choice when your image includes small text, a detailed face, a dense infographic, or anything going directly into a final deliverable. Auto lets the model decide based on the complexity of the prompt.
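The guidance above can be written down as a simple decision rule. This is only an illustrative sketch: `pick_quality` and its flags are hypothetical, and the rule is a direct reading of the paragraph above rather than anything the model or Scenario enforces.

```python
def pick_quality(
    draft: bool = False,
    final_deliverable: bool = False,
    small_text: bool = False,
    detailed_face: bool = False,
    dense_infographic: bool = False,
) -> str:
    """Map the needs of a job to one of the quality presets: low, medium, or high."""
    # Any detail-sensitive content, or a final deliverable, calls for high quality.
    if final_deliverable or small_text or detailed_face or dense_infographic:
        return "high"
    # Drafts and ideation favor speed.
    if draft:
        return "low"
    # Medium covers most remaining production needs.
    return "medium"


if __name__ == "__main__":
    print(pick_quality(draft=True))        # low
    print(pick_quality(small_text=True))   # high
    print(pick_quality())                  # medium
```

The rule never returns auto; pass auto explicitly when you would rather let the model decide.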

Background

This setting controls whether the model generates an opaque background or makes that decision automatically. Transparent backgrounds are not supported by GPT Image 2. If your workflow requires a transparent output, the recommended approach is to generate with an opaque white or neutral background and then remove it using a background removal model as a second step.

Supported Resolution Presets

The Scenario UI includes the following presets, which cover the most common formats. You can also enter custom dimensions as long as they follow the constraints above.

9:16 Portrait (2160x3840): Mobile wallpapers, vertical social content.

2:3 Portrait (1024x1536): Trading cards, book covers, character sheets.

1:1 Standard (1024x1024): Social media posts, icons, product shots.

1:1 Large (2048x2048): High-resolution square assets, seamless textures.

3:2 Landscape (1536x1024): Environment art, banners, thumbnails.

16:9 HD (2048x1152): Game backgrounds, presentation slides, cinematics.

16:9 4K (3840x2160): Hero images, large-format print, ultra-HD assets.

Keep in mind that both dimensions must be multiples of 16, the maximum edge is 3840px, the aspect ratio cannot exceed 3:1, and outputs above 2560x1440 are experimental.
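When a target size comes from elsewhere (a game engine, a print spec), it can help to map it to the nearest preset. The sketch below copies the preset sizes from the list above; the `closest_preset` helper is hypothetical and simply picks the preset with the closest aspect ratio, breaking ties by the closest pixel count.

```python
import math

# Preset names and sizes as listed in this guide.
PRESETS = {
    "9:16 Portrait": (2160, 3840),
    "2:3 Portrait": (1024, 1536),
    "1:1 Standard": (1024, 1024),
    "1:1 Large": (2048, 2048),
    "3:2 Landscape": (1536, 1024),
    "16:9 HD": (2048, 1152),
    "16:9 4K": (3840, 2160),
}


def closest_preset(width: int, height: int) -> str:
    """Return the preset name with the nearest aspect ratio, then nearest pixel count."""
    target_ratio = math.log(width / height)
    target_pixels = width * height

    def distance(item: tuple[str, tuple[int, int]]) -> tuple[float, int]:
        _name, (w, h) = item
        # Compare ratios on a log scale so 2:1 and 1:2 are equally far from 1:1.
        ratio_gap = round(abs(math.log(w / h) - target_ratio), 6)
        return ratio_gap, abs(w * h - target_pixels)

    return min(PRESETS.items(), key=distance)[0]


if __name__ == "__main__":
    print(closest_preset(1920, 1080))  # 16:9 HD
    print(closest_preset(1080, 1920))  # 9:16 Portrait
    print(closest_preset(512, 512))    # 1:1 Standard
```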

Inpainting with Masking Guides

Inpainting lets you edit a specific region of an image while keeping the rest unchanged. To define this region, you can use a black-and-white mask image, where the white shape marks the area you want to change or fill. Note that this mask serves only as a visual guide for positioning and scale: the generated result will not follow the mask's boundaries exactly.

Production Workflows

Copy these patterns verbatim, then swap asset numbers to match your uploads.

1. Environment kitbash

Prompt: Image 1 is the layout sketch. Image 2 is the material mood board. Build a single 16:9 environment concept with readable silhouettes, physically plausible scale, and color grading that matches Image 2. No characters.

2. SKU-accurate product mockup

Prompt: Image 1 is the packshot. Place it on a marble countertop with soft window light. Add subtle reflections that respect the real geometry from Image 1. Leave 10 percent margin for legal copy.

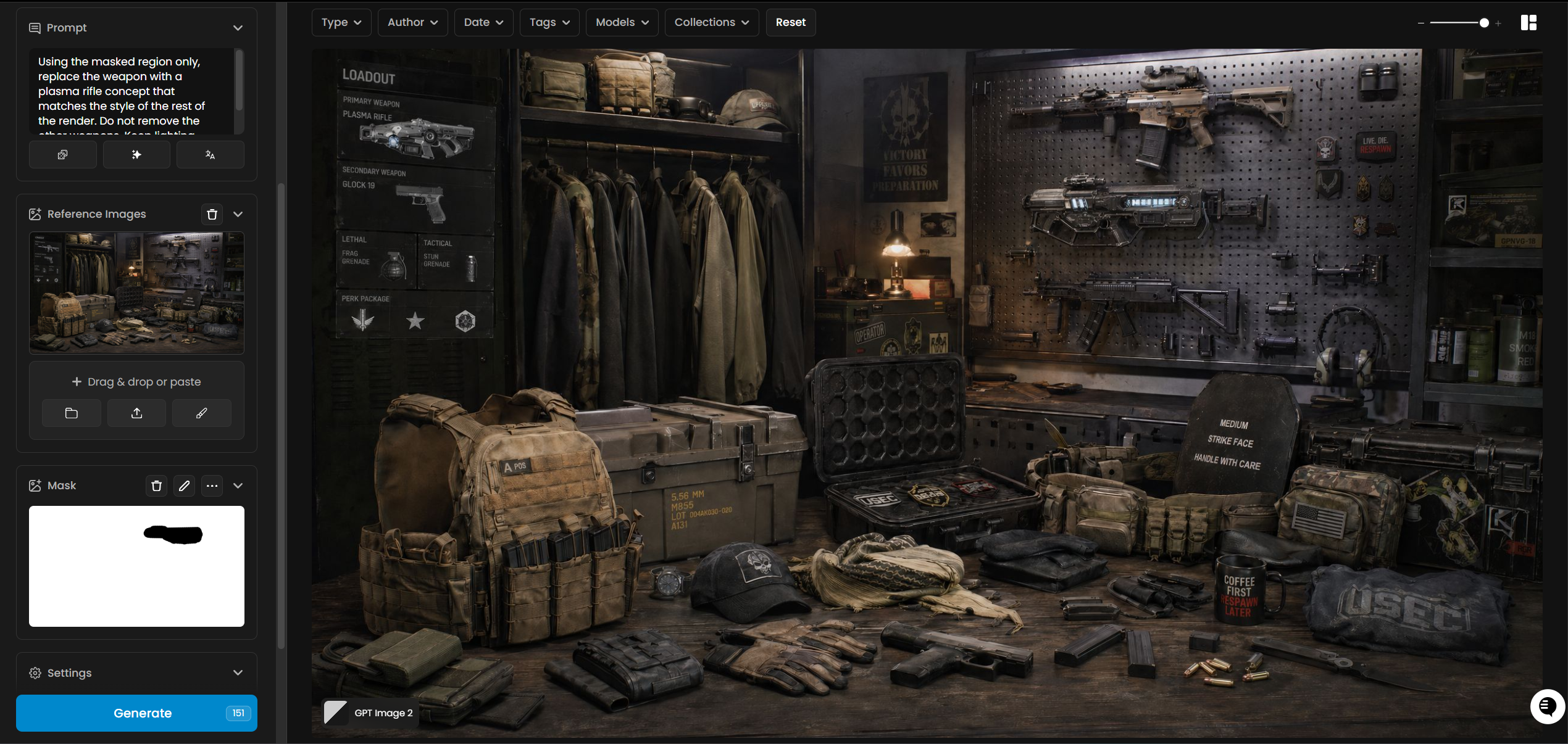

3. Game asset inpaint

Prompt: Using the masked region only, replace the weapon with a plasma rifle concept that matches the style of the rest of the render. Do not remove the other weapons. Keep lighting unchanged.

Reference Roles Cheat Sheet

Role | When to use | Example prompt fragment
Identity | Face, body, wardrobe lock |
Style | Brushwork, film stock, palette |
Object | Hero prop or product |
Environment | Set, architecture, biome |

GPT Image 1.5 vs GPT Image 2

Reasoning depth: GPT Image 2 runs a stronger planning pass before pixels land, which helps dense scenes, multi-subject blocking, and long prompts.

Reference capacity: GPT Image 2 supports richer many-image conditioning (up to 10 references) for comp work; GPT Image 1.5 is lighter for quick iterations.

Text in image: GPT Image 2 is the safer default for posters, UI mocks, and packaging proofs; still proofread output.

When to stay on 1.5: Use GPT Image 1.5 when you need the fastest turnaround on simple subjects and do not need maximum reference fidelity.

Character Consistency Playbook

Upload the same turnaround every time you extend a storyline.

Lock seed once a hero frame looks correct, then iterate prompts in small deltas.

Mirror critical traits in text (hair length, costume seams, prop silhouette) even when references exist.

For comic or serial content, generate a master neutral pose, then reuse it as Image 1 for each new scene.

Tips for Better Results

Structure your prompt clearly. Start with the scene or setting, then describe the subject, then add stylistic or technical details. For example: "A sunlit forest clearing at golden hour. A lone fox sits on a mossy rock. Photorealistic, shot on an 85mm lens, shallow depth of field."

Use quality: low for drafts, quality: high for finals. Low quality is fast and sufficient for concept exploration. Switch to medium or high when working with small text, detailed faces, infographics, or final deliverables.

For text inside images, use quotes or ALL CAPS. Spell out difficult words or brand names letter-by-letter in your prompt. Use medium or high quality for reliable text rendering.

For photorealism, say so explicitly. Include "photorealistic," "real photograph," or "shot on a camera" in your prompt. These cues steer the model toward photorealistic rendering.

Use Image Count 4 when exploring options. Generating multiple outputs at once is faster and cheaper than running separate jobs, and gives you more directions to compare.

When editing with references, label each image in the prompt. Write "Image 1 is the character reference. Image 2 is the environment. Place the character from Image 1 into the environment of Image 2." This reduces ambiguity.

State what should NOT change. If you are editing part of an image, explicitly tell the model to preserve the rest: "Change only the background. Keep the subject, lighting on the subject, and camera angle exactly the same."

For character consistency across multiple generations, re-upload the character reference image on each subsequent generation and repeat the key descriptors (hair, outfit, proportions) in the prompt to prevent drift.

Known Limitations

No transparent background support. The model does not output images with transparent backgrounds. Use the Background: opaque setting and remove the background in a separate step with a background removal model.

Complex prompts can take up to 2 minutes. High-detail prompts at large resolutions may have longer generation times. This is expected behavior, not an error.

Resolutions above 2560x1440 are experimental. Quality and consistency may be more variable at 4K resolutions. Test at 2K first and upscale if needed.

Mask format must exactly match the reference image. The mask and reference image must use the same format and identical pixel dimensions. Mismatches will cause the request to fail.

Character consistency requires repetition. The model does not retain context between separate generation sessions. Re-supply reference images and repeat character descriptors on every new generation to maintain consistency.

Text rendering is improved but not perfect. For very dense text, complex layouts, or non-Latin scripts, use quality: high and inspect the output before use in production.

Use Cases

Games: Generate character concept art, environment backgrounds, item icons, and UI elements. Example prompt:

Image 1 is the clan armor concept. Build an isometric squad shot with three variants, 2048x1152, hand-painted textures, no text.

Use reference images to maintain consistency across a character's appearance in multiple scenes.

Marketing and advertising: Create product mockups, lifestyle visuals, and ad creatives. Use inpainting to swap backgrounds or update product colorways without regenerating the full image.

Film and pre-production: Produce storyboard frames, mood boards, and set design references. Use multiple reference images to blend lighting style, costume, and environment into a single coherent output.

Education and documentation: Create infographics, diagrams, and illustrated guides. Use quality: high for any image that includes text labels or structured layouts.

E-commerce and product visualization: Place a product in a new context or environment by using it as a reference image and describing the target scene in the prompt.